Insurance predictive analytics has moved into the core of claims and risk decisioning, yet production success remains uneven across carriers. The challenge is not model availability, but translating predictions into defensible, operational claim actions.

Building effective insurance predictive analytics models requires more than algorithm selection or accuracy optimization. Teams must balance statistical performance with interpretability, regulatory acceptance, and real-world claims behavior.

Below, we examine how predictive analytics models for insurance claims are built, operationalized, and governed in practice. We focus on modeling approaches, data requirements, and trade-offs that determine whether predictions survive deployment, scrutiny, and time.

Key Takeaways

- Predictive analytics in insurance claims relies on deliberate model selection, balancing GLMs and machine learning to meet accuracy, interpretability, and regulatory requirements.

- Effective claims prediction depends on data quality, historical depth, and stable labels, with unstructured data enhancing models only when treated as engineered features.

- Model outputs such as severity scores, risk segments, and probability estimates drive prioritization and portfolio insight, not automated claim decisions.

- Production success requires continuous monitoring through business-aligned KPIs, active drift detection, and disciplined performance governance.

- Auditability, ethical safeguards, and human oversight are essential to ensure predictive models remain defensible, trusted, and resilient over time.

Building Predictive Models: Core Methodology and Trade-offs

Predictive analytics models in insurance claims are shaped as much by regulatory, actuarial, and operational constraints as by statistical theory.

For analytics leaders and actuaries, modeling choices determine not only forecast accuracy but also regulatory defensibility and practical usability in claims environments. These decisions directly influence whether model outputs are trusted, adopted, and sustained in production.

Classical Foundations: The Role of Generalized Linear Models (GLMs)

Generalized Linear Models remain foundational to insurance predictive analytics because they align closely with established approaches to risk quantification. Their longevity in production reflects consistency with actuarial standards, rating manuals, and accepted loss modeling practices. GLMs integrate naturally with relativity structures, enabling insurers to explain how specific factors contribute to predicted outcomes.
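
To make the relativity idea concrete, here is a minimal sketch assuming a log-link GLM, a common choice for claim frequency or severity. Under a log link, each coefficient exponentiates to a multiplicative relativity, so a prediction equals a base value times the relativities for the claim's characteristics. The coefficient values and feature names are hypothetical placeholders, not fitted results.

```python
import math

# Hypothetical fitted coefficients from a log-link GLM (illustrative values only).
INTERCEPT = math.log(2500.0)  # base severity of 2,500 for the reference class
COEFFICIENTS = {
    "attorney_involved": 0.40,   # binary indicator
    "injury_claim": 0.25,        # binary indicator
    "vehicle_age_years": -0.02,  # continuous
}


def relativities(coefs):
    """Convert log-link coefficients into multiplicative relativities exp(beta)."""
    return {name: math.exp(beta) for name, beta in coefs.items()}


def predict_severity(features, intercept=INTERCEPT, coefs=COEFFICIENTS):
    """Predicted mean = exp(intercept + sum(beta_j * x_j)).

    This equals the base value exp(intercept) multiplied by exp(beta_j * x_j)
    for each feature, which is the relativity form used in rating structures.
    Missing features are treated as 0 (the reference level).
    """
    linear_predictor = intercept + sum(coefs[k] * features.get(k, 0.0) for k in coefs)
    return math.exp(linear_predictor)


if __name__ == "__main__":
    claim = {"attorney_involved": 1, "injury_claim": 1, "vehicle_age_years": 5}
    print({k: round(v, 3) for k, v in relativities(COEFFICIENTS).items()})
    print(round(predict_severity(claim), 2))
```

Because each relativity can be read off independently, reviewers can check every factor against rating manuals or prior studies.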

Several characteristics explain why GLMs continue to anchor claims and risk models in regulated insurance environments:

- Interpretability: Model coefficients provide transparent, directional insight into how individual variables influence predicted outcomes, supporting actuarial validation and regulatory review.

- Stability: GLMs tend to maintain consistent performance over time and are less sensitive to transient noise in historical claim data.

- Regulatory acceptance: The traceability of inputs and outputs reduces audit friction and simplifies compliance with model governance requirements.

Despite these strengths, GLMs have well-defined limitations. They struggle to capture complex feature interactions and non-linear relationships without extensive manual feature engineering. As claim data becomes more heterogeneous, these constraints become more pronounced. GLMs are therefore best viewed as a robust, defensible baseline rather than a complete solution for all predictive use cases.

Advanced Techniques: Machine Learning Models for Enhanced Forecasting

Machine learning addresses many of these limitations. Techniques such as Random Forests, Gradient Boosting Machines (GBM), XGBoost, LightGBM, and neural networks can uncover non-linear patterns and complex feature interactions that GLMs cannot efficiently represent. These strengths are particularly valuable in high-dimensional claims datasets, where subtle or compounding risk signals may exist.

Despite these advantages, ML models also bring specific organizational and operational risks, including:

- Reduced explainability: Predictions are harder to trace for audit and regulatory review.

- Instability and overfitting: Models may perform well historically but degrade as claims patterns evolve.

- Governance challenges: Complex models demand rigorous monitoring, validation, and audit frameworks.

In practice, predictive analytics in insurance claims rarely relies on machine learning alone. Production environments commonly adopt ML-augmented approaches that preserve GLM-based structures while incorporating machine learning where it adds measurable value. These hybrid strategies reflect operational and regulatory realities rather than theoretical optimization.
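
One common way to keep a GLM structure while letting machine learning contribute is to treat the GLM prediction as the base and apply a bounded ML correction factor on top. The sketch below assumes that pattern. `toy_residual_model` stands in for any hypothetical residual model (for example, a gradient-boosted model trained on the ratio of actual to GLM-predicted cost), and the floor and cap are illustrative governance choices, not standard settings.

```python
from typing import Callable, Dict

Features = Dict[str, float]


def hybrid_prediction(
    features: Features,
    glm_predict: Callable[[Features], float],
    ml_adjustment: Callable[[Features], float],
    floor: float = 0.8,
    cap: float = 1.25,
) -> float:
    """Combine a GLM base prediction with a bounded multiplicative ML correction.

    The ML factor is clipped to [floor, cap], so the GLM relativities remain
    the primary, explainable driver of the final prediction.
    """
    factor = ml_adjustment(features)
    bounded = min(max(factor, floor), cap)
    return glm_predict(features) * bounded


# Hypothetical stand-ins for a fitted GLM and a fitted residual model.
def toy_glm(x: Features) -> float:
    return 1000.0 * (1.5 if x.get("injury_claim") else 1.0)


def toy_residual_model(x: Features) -> float:
    # Pretends the ML model found an interaction the main-effects GLM misses.
    return 1.6 if x.get("injury_claim") and x.get("late_report") else 1.0


if __name__ == "__main__":
    claim = {"injury_claim": 1, "late_report": 1}
    print(hybrid_prediction(claim, toy_glm, toy_residual_model))
```

Bounding the correction trades some potential accuracy for predictable behavior that actuaries and reviewers can reason about.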

The Predictability vs Interpretability Trade-off

The central tension in insurance claims modeling lies between predictive accuracy and interpretability. Advanced models may deliver incremental accuracy gains, but their opacity can limit adoption and increase regulatory risk. Actuarial and compliance stakeholders require clarity on how predictions are generated and how uncertainty is managed.

Model uncertainty is therefore a critical consideration. Confidence intervals, prediction volatility, and sensitivity to input variation influence whether outputs can responsibly support reserving, segmentation, or intervention decisions. A model that performs well on average but produces unstable or poorly explained predictions can create unacceptable risk exposure.
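
Prediction uncertainty can be quantified in several ways; a simple, assumption-light option is the percentile bootstrap. The sketch below resamples observed claim costs for one segment to put an interval around mean severity. The approach is illustrative: a production setup would more likely bootstrap the full model fit or use model-based intervals, and any sample values fed to it here are hypothetical.

```python
import random
import statistics
from typing import List, Sequence, Tuple


def bootstrap_mean_ci(
    values: Sequence[float],
    n_resamples: int = 2000,
    alpha: float = 0.05,
    seed: int = 7,
) -> Tuple[float, float, float]:
    """Return (mean, lower, upper) using a percentile bootstrap interval."""
    if not values:
        raise ValueError("values must be non-empty")
    rng = random.Random(seed)  # fixed seed so the interval is reproducible
    n = len(values)
    means: List[float] = []
    for _ in range(n_resamples):
        # Resample with replacement, same size as the original sample.
        sample = [values[rng.randrange(n)] for _ in range(n)]
        means.append(statistics.fmean(sample))
    means.sort()
    lo_idx = int((alpha / 2) * n_resamples)
    hi_idx = int((1 - alpha / 2) * n_resamples) - 1
    return statistics.fmean(values), means[lo_idx], means[hi_idx]


if __name__ == "__main__":
    segment_costs = [1200.0, 950.0, 3100.0, 780.0, 15000.0, 2200.0, 1800.0, 640.0]
    print(bootstrap_mean_ci(segment_costs))
```

A wide interval relative to the mean, as a single large claim tends to produce in a small segment, is a signal that the estimate should not drive reserving or intervention decisions on its own.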

For these reasons, the most accurate model is not always the most appropriate choice. Trust, regulatory compliance, adoption, and operational risk often outweigh marginal improvements in predictive performance. These decisions are fundamentally business and governance judgments, distinguishing production-ready predictive analytics from experimental modeling.

In Summary:

- Predictive model selection in insurance balances accuracy, interpretability, and regulatory defensibility to ensure production adoption and sustained trust.

- GLMs remain foundational for their transparency, stability, and regulatory acceptance, serving as a robust baseline for risk modeling.

- Machine learning enhances predictive power but introduces explainability, instability, and governance challenges that require careful oversight.

- Hybrid approaches combining GLMs and ML strike a balance between predictive performance, interpretability, and operational practicality.

Data Engineering for Predictive Claim Models

High-quality, robust data is the foundation of effective predictive analytics in insurance claims. Models, whether GLMs or machine learning, can only perform as well as the features derived from structured and unstructured inputs. Understanding claim characteristics and ensuring the integrity of these inputs is essential for building defensible, production-ready models.

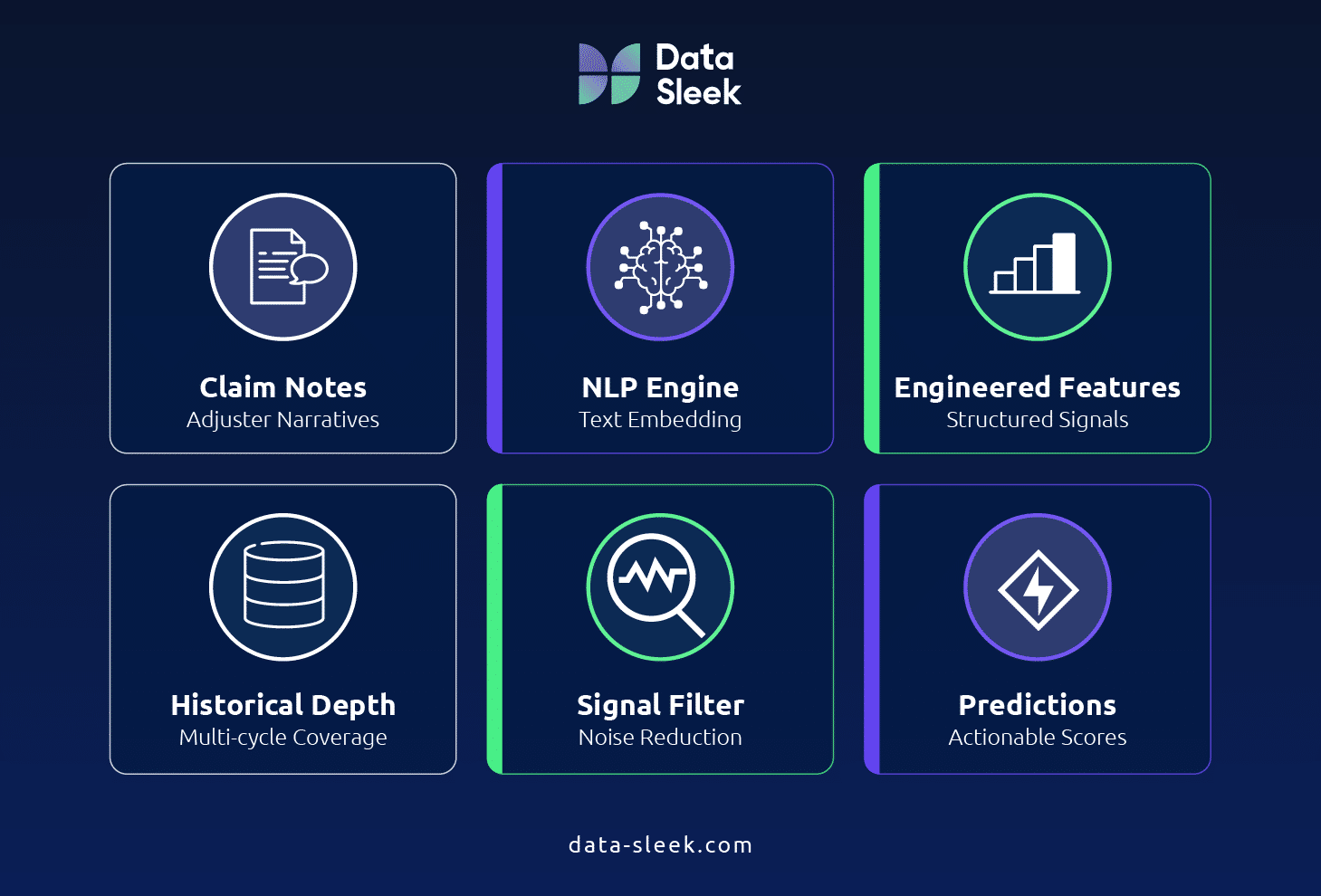

Leveraging Unstructured Data with NLP and Deep Learning

Natural Language Processing (NLP) serves as a sophisticated feature engineering layer, transforming qualitative claim narratives into actionable quantitative signals.

Rather than treating adjuster notes and claim commentary as inert data, NLP extracts patterns that highlight severity, fraud, and litigation risk. Techniques such as text embeddings enable predictive models to capture subtle early indicators, enhancing model precision without replacing structured data.
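
Text embeddings require a trained language model, so the sketch below substitutes a much simpler technique to show the same idea: turning free-text adjuster notes into numeric features that a GLM or ML model can consume. It counts whole-word matches against small keyword lists. The lists and feature names are hypothetical and would need to be derived and validated from an insurer's own claim notes.

```python
import re
from typing import Dict, List

# Hypothetical signal lexicons; real lexicons (or embeddings) would be learned and validated.
SIGNAL_TERMS: Dict[str, List[str]] = {
    "litigation_signal": ["attorney", "lawyer", "lawsuit", "represented"],
    "severity_signal": ["surgery", "hospital", "fracture", "total loss"],
    "fraud_signal": ["inconsistent", "no witnesses", "prior claim"],
}


def text_features(note: str) -> Dict[str, float]:
    """Map an adjuster note to keyword-count features plus a note-length feature."""
    text = note.lower()
    features: Dict[str, float] = {}
    for name, terms in SIGNAL_TERMS.items():
        # Word-boundary matching avoids counting 'hospitality' as 'hospital'.
        features[name] = float(
            sum(len(re.findall(r"\b" + re.escape(t) + r"\b", text)) for t in terms)
        )
    features["note_word_count"] = float(len(text.split()))
    return features


if __name__ == "__main__":
    print(text_features("Claimant is represented by an attorney; taken to hospital for surgery."))
```

The output is an ordinary numeric feature vector, which is what lets narrative signals sit alongside structured inputs without changing the downstream model type.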

In practice, NLP enriches the feature set while maintaining alignment with regulatory and actuarial expectations. It is a tool for signal extraction rather than document automation, and it strengthens both classical and machine learning models by uncovering patterns that structured inputs alone cannot reveal.

Ensuring Data Integrity and Robust Inputs

Even the most sophisticated model cannot compensate for poor data quality. Reliable predictive analytics requires disciplined attention to:

- Historical depth: Data should span multiple economic cycles and catastrophic events to capture variability in claim outcomes.

- Label stability: Definitions of high severity, fraud, or other target events should remain consistent over time.

- Signal vs. noise: Models should focus on features with genuine predictive value rather than irrelevant data points.

- Data leakage: Training datasets must exclude information unavailable at the time of prediction to preserve model validity.
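
Leakage often enters through fields updated after the prediction point, such as a later reserve change or attorney letter. A minimal guard, assuming each field value carries the timestamp at which it became known, is to build features only from values observed at or before the prediction time (for example, FNOL). The `FieldObservation` record layout below is hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Dict, List


@dataclass(frozen=True)
class FieldObservation:
    """A single field value and the time it became known (hypothetical schema)."""
    name: str
    value: float
    observed_at: datetime


def point_in_time_features(
    observations: List[FieldObservation], prediction_time: datetime
) -> Dict[str, float]:
    """Keep the latest value of each field known at or before prediction_time.

    Values recorded after prediction_time are excluded, because they would
    not be available when the model scores the claim in production.
    """
    latest: Dict[str, FieldObservation] = {}
    for obs in observations:
        if obs.observed_at > prediction_time:
            continue  # future information: exclude to prevent leakage
        current = latest.get(obs.name)
        if current is None or obs.observed_at > current.observed_at:
            latest[obs.name] = obs
    return {name: obs.value for name, obs in latest.items()}
```

The same cut-off applies when assembling training data: each historical claim should be represented by the features it had at its own prediction time, not by its final, fully developed state.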

Ensuring these dimensions of data integrity protects model reliability, regulatory defensibility, and operational adoption. Insurers must be able to demonstrate control over data consistency, completeness, and timeliness through clearly defined data quality KPIs across the claims data pipeline. High-quality inputs are the cornerstone upon which actionable predictive insights are built.
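
Data quality KPIs can start simple. The sketch below computes two of the dimensions named above over a batch of claim records: completeness (share of required field values populated) and timeliness (share of records loaded within an agreed lag of being reported). The field names and the three-day lag are hypothetical.

```python
from datetime import datetime, timedelta
from typing import Any, Dict, Sequence

Record = Dict[str, Any]


def completeness(records: Sequence[Record], required: Sequence[str]) -> float:
    """Share of required field values that are present and non-empty."""
    total = len(records) * len(required)
    if total == 0:
        return 1.0
    filled = sum(1 for r in records for f in required if r.get(f) not in (None, ""))
    return filled / total


def timeliness(records: Sequence[Record], max_lag: timedelta = timedelta(days=3)) -> float:
    """Share of records loaded within max_lag of their report timestamp."""
    dated = [r for r in records if r.get("reported_at") and r.get("loaded_at")]
    if not dated:
        return 1.0
    on_time = sum(1 for r in dated if r["loaded_at"] - r["reported_at"] <= max_lag)
    return on_time / len(dated)


if __name__ == "__main__":
    batch = [
        {"claim_id": "C1", "loss_date": "2024-01-02",
         "reported_at": datetime(2024, 1, 3), "loaded_at": datetime(2024, 1, 4)},
        {"claim_id": "C2", "loss_date": None,
         "reported_at": datetime(2024, 1, 3), "loaded_at": datetime(2024, 1, 10)},
    ]
    print(completeness(batch, ["claim_id", "loss_date"]), timeliness(batch))
```

Tracked per load and per source system, ratios like these give a concrete, auditable record of data control over time.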

In Summary:

- Predictive models rely on both structured and unstructured data with sufficient depth, stability, and reliability.

- NLP transforms qualitative narratives into quantitative features that reveal early indicators of severity, fraud, and litigation.

- Historical coverage, consistent labels, and careful management of signal vs. noise are essential for model validity.

- Data quality and integrity cannot be substituted by model sophistication; robust inputs are the foundation of defensible predictive analytics.

Operationalizing Predictive Models in Claims Management

Predictive analytics delivers value only when outputs are translated into actionable insights for claims decision-making. Outputs such as severity scores, risk tiers, and probability estimates guide prioritization, resource allocation, and operational focus.

Emphasizing decision-level impact ensures models improve efficiency, reduce leakage, and target high-impact claims while remaining auditable and defensible.

Mechanics of Severity Scoring and Risk Segmentation

Severity scoring ranks claims by predicted cost, complexity, or exposure, allowing insurers to prioritize adjuster effort where it matters most. Risk segmentation groups claims into tiers that clarify priorities, guide operational planning, and improve decision-making at both claim and portfolio levels.
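
Severity scoring and segmentation can be as simple as sorting claims by predicted cost and cutting the ranking into tiers. The sketch below assumes predicted severities already come from a model; the tier boundaries are hypothetical and in practice would be set with claims leadership against adjuster capacity.

```python
from typing import Dict, List, Sequence, Tuple

# Hypothetical tier thresholds on predicted cost (inclusive lower bounds), highest first.
TIERS: Sequence[Tuple[str, float]] = (
    ("high", 50_000.0),
    ("medium", 10_000.0),
    ("low", 0.0),
)


def assign_tier(predicted_cost: float, tiers: Sequence[Tuple[str, float]] = TIERS) -> str:
    """Return the first tier whose lower bound the predicted cost meets."""
    for name, lower_bound in tiers:
        if predicted_cost >= lower_bound:
            return name
    return tiers[-1][0]  # fall back to the lowest tier


def segment_claims(predictions: Dict[str, float]) -> List[Tuple[str, float, str]]:
    """Rank claims by predicted cost (descending) and attach a tier label."""
    ranked = sorted(predictions.items(), key=lambda item: item[1], reverse=True)
    return [(claim_id, cost, assign_tier(cost)) for claim_id, cost in ranked]


if __name__ == "__main__":
    print(segment_claims({"A": 12_000.0, "B": 75_000.0, "C": 800.0}))
```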

Key benefits of segmentation include:

- Prioritization of high-impact claims: Focus adjuster attention on the most complex or high-severity claims.

- Leakage reduction: Identify claims likely to escalate, preventing avoidable losses.

- Cycle time improvement: Allocate resources efficiently to resolve low-risk claims faster.

This structure ensures predictive outputs are actionable, auditable, and aligned with business objectives.

Core Predictive Outputs: Severity, Frequency, and Loss Ratio Forecasting

Predictive models generate outputs that directly inform decisions at both the claim level and portfolio level. These outputs help insurers anticipate financial impact, allocate resources, and manage risk proactively.

Key outputs include:

- Probability scores: Likelihood of specific claim outcomes, guiding adjuster prioritization and operational decision-making.

- Dollar-value predictions: Estimated financial exposure per claim, supporting case funding and resource allocation.

- Case reserving implications: Inform recommended case reserves per claim, consistent with portfolio-level financial forecasts.

- Level of insight:

  - Individual claims: Inform adjuster decisions and day-to-day claim handling.

  - Portfolio-level: Provide visibility into overall exposure, loss trends, and strategic resource planning.

By separating outputs into tactical (claim-level) and strategic (portfolio-level), insurers can translate predictive analytics into measurable operational and financial impact. This framework ensures that models are actionable, auditable, and aligned with business objectives, maximizing their utility across the claims organization.
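
The bridge between claim-level and portfolio-level outputs is often plain aggregation. As a sketch, assume each claim has a modeled probability of resulting in a payment and a severity estimate given payment; expected cost per claim is their product (a frequency-severity decomposition), and summing expected costs over earned premium gives a simplified projected loss ratio. The `ClaimPrediction` fields are hypothetical.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class ClaimPrediction:
    claim_id: str
    p_payment: float         # modeled probability the claim results in a payment
    severity_if_paid: float  # modeled cost given that a payment occurs


def expected_cost(c: ClaimPrediction) -> float:
    """Claim-level expected cost under a frequency-severity decomposition."""
    return c.p_payment * c.severity_if_paid


def projected_loss_ratio(claims: Iterable[ClaimPrediction], earned_premium: float) -> float:
    """Portfolio-level view: total expected losses divided by earned premium.

    This simplified ratio ignores IBNR, loss adjustment expense, and loss
    development, which a full actuarial projection would include.
    """
    if earned_premium <= 0:
        raise ValueError("earned_premium must be positive")
    return sum(expected_cost(c) for c in claims) / earned_premium
```

The same claim-level numbers feed both views, which keeps tactical and strategic reporting reconcilable.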

Targeting High-Impact Claims: Litigation, Jumpers, and Fraud

Models identify claims with high probability of litigation, fraud, or unusual escalation, enabling early, cost-effective intervention. Predictive analytics emphasizes pattern recognition and signal-based risk flags rather than rule-based alerts, producing actionable insights early in the claim lifecycle.

Key points:

- Early-cycle prediction: Risk flags appear as soon as data is available.

- Signal-based risk indicators: Highlight claims with elevated severity, fraud potential, or litigation risk.

- Pattern recognition approaches: Detect subtle combinations of features indicative of high-risk claims.

- Cost-effective intervention: Early identification allows proactive management, reducing potential losses compared with reactive handling.

This approach ensures high-impact claims receive attention while maintaining auditability, regulatory compliance, and transparency.
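
Whether early intervention is cost-effective can be framed as an expected-value comparison: act when the modeled probability of escalation times the loss an intervention could avoid exceeds the cost of intervening, then work the list in order of net value up to available capacity. The sketch assumes those inputs exist per claim; the dictionary keys are hypothetical, and estimating avoidable loss is itself a modeling problem.

```python
from typing import Dict, List


def should_intervene(p_escalation: float, avoidable_loss: float, intervention_cost: float) -> bool:
    """Flag a claim when expected avoided loss exceeds the intervention cost."""
    return p_escalation * avoidable_loss > intervention_cost


def prioritize(claims: Dict[str, Dict[str, float]], capacity: int) -> List[str]:
    """Return up to `capacity` claim IDs worth intervening on, highest net value first.

    Each claim dict holds hypothetical keys: p (escalation probability),
    avoidable_loss, and cost (intervention cost).
    """
    candidates = []
    for claim_id, c in claims.items():
        if should_intervene(c["p"], c["avoidable_loss"], c["cost"]):
            net_value = c["p"] * c["avoidable_loss"] - c["cost"]
            candidates.append((net_value, claim_id))
    candidates.sort(reverse=True)
    return [claim_id for _, claim_id in candidates[:capacity]]
```

The capacity limit reflects that adjuster and investigator time is finite; a claim can be worth flagging in principle and still fall below the line on a busy week.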

In Summary:

- Severity scoring and risk segmentation prioritize high-impact claims, reduce leakage, and improve cycle times.

- Core predictive outputs include probability scores, dollar-value predictions, and case reserves at both claim and portfolio levels.

- Signal-based, early-cycle detection identifies high-risk claims, including fraud, litigation, and jumpers, enabling cost-effective intervention.

- Operationalization focuses on actionable insights, aligning predictive outputs with regulatory requirements, business objectives, and operational realities.

Deployment, Monitoring, and Governance of AI Models

Predictive models deliver value only when properly deployed, continuously monitored, and rigorously governed. Deployment is not the endpoint; ongoing oversight ensures models remain accurate, ethical, and compliant. Effective governance balances performance tracking, auditability, and risk mitigation, enabling executives and operational teams to trust and act on model outputs.

KPIs for Ongoing Model Performance

Monitoring focuses on both statistical performance and business impact. Tracking key KPIs ensures models remain actionable, reliable, and resilient as real-world claim patterns change.

Key metrics include:

- Alert Rate: Percentage of claims flagged for review.

- Qualification Rate: Proportion of flagged claims confirmed as relevant upon human review.

- Acceptance / Investigation Rate: Share of flagged claims escalated for further handling.

- Impact / Transformation Rate: Measurable reduction in loss costs, cycle times, or operational inefficiencies resulting from model-driven decisions.

- Model Drift Indicators: Detect changes in claim patterns that could degrade model performance over time.

These metrics tie directly to operational outcomes and ensure models remain actionable, auditable, and strategically aligned.
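
The rate-style KPIs above are ratios of monitoring counts, and drift indicators are commonly computed by comparing a score or feature distribution between a baseline period and a recent period. The sketch below shows both. The count names are hypothetical, and the Population Stability Index (PSI) is one common drift measure among several; the frequently quoted 0.1 and 0.25 alert levels are rules of thumb, not standards.

```python
import math
from typing import Dict, Sequence


def kpi_rates(total: int, flagged: int, qualified: int, escalated: int) -> Dict[str, float]:
    """Alert, qualification, and acceptance/investigation rates from monitoring counts."""
    def ratio(num: int, den: int) -> float:
        return num / den if den else 0.0

    return {
        "alert_rate": ratio(flagged, total),             # flagged / all scored claims
        "qualification_rate": ratio(qualified, flagged),  # confirmed relevant / flagged
        "acceptance_rate": ratio(escalated, flagged),     # escalated / flagged
    }


def psi(baseline_counts: Sequence[int], current_counts: Sequence[int], eps: float = 1e-6) -> float:
    """Population Stability Index between two binned distributions (same bins).

    PSI = sum((a_i - e_i) * ln(a_i / e_i)), where e_i is the baseline share and
    a_i the current share of bin i. eps guards against empty bins.
    """
    if len(baseline_counts) != len(current_counts):
        raise ValueError("bin counts must align")
    e_total, a_total = sum(baseline_counts), sum(current_counts)
    value = 0.0
    for e_count, a_count in zip(baseline_counts, current_counts):
        e = max(e_count / e_total, eps)
        a = max(a_count / a_total, eps)
        value += (a - e) * math.log(a / e)
    return value
```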

Auditability and Transparency in Predictive Models

Insurance models must be fully traceable and explainable because regulators and internal auditors expect clear evidence of how predictions were generated, how inputs influenced outcomes, and how decisions remain defensible when data limitations materially affect AI-driven conclusions.

Key considerations:

- Regulatory expectations: Models must be defensible according to actuarial standards and established insurance data governance frameworks.

- Traceability challenges: ML and hybrid models require mechanisms to show how inputs map to outputs.

- Explainability techniques: Post-hoc attribution methods such as SHAP or LIME show which inputs drove an individual prediction, giving stakeholders a clear rationale without requiring them to inspect model internals.

- Non-negotiable auditability: Traceable and transparent models are essential for compliance, internal reviews, and external audits.

This ensures models are not black boxes, supporting adoption, trust, and regulatory compliance.
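
For models whose score is linear in the features, such as a GLM's linear predictor, per-feature contributions can be computed exactly: each feature contributes its coefficient times its deviation from a baseline such as the portfolio mean. Under a feature-independence assumption this coincides with SHAP values for linear models, and the contributions sum to the difference between the claim's score and the baseline score. The coefficients, baselines, and feature names used with it are hypothetical; tree ensembles and neural networks need dedicated explainers instead.

```python
from typing import Dict, List, Tuple


def linear_contributions(
    x: Dict[str, float], coefs: Dict[str, float], baseline: Dict[str, float]
) -> Dict[str, float]:
    """Contribution of each feature to the linear predictor relative to a baseline."""
    return {
        name: beta * (x.get(name, 0.0) - baseline.get(name, 0.0))
        for name, beta in coefs.items()
    }


def reason_codes(contribs: Dict[str, float], top_n: int = 3) -> List[Tuple[str, float]]:
    """Features that pushed the score up the most, largest first."""
    positive = [(name, c) for name, c in contribs.items() if c > 0]
    return sorted(positive, key=lambda item: item[1], reverse=True)[:top_n]
```

Ranked contributions like these give auditors a per-claim record of why a score was high, which is the traceability the considerations above call for.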

Ethical AI and Risk Mitigation

Models must operate fairly and reliably, and they must resist exploitation. Ethical AI safeguards protect both the organization and policyholders while maintaining confidence in predictive analytics.

Key focus areas:

- Bias in training data and model design: Detect and mitigate historical or systemic bias.

- Incorruptibility: Prevent manipulation of the model by external or internal actors.

- Accountability frameworks: Define ownership and responsibility for model outputs and decisions.

- Human oversight: Critical claims decisions, including high-impact claims, require human review.

Ethical deployment ensures models are fair, defensible, and aligned with corporate governance, mitigating both operational and reputational risk.
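
A first-pass bias screen compares how often the model flags claims across groups. The sketch computes per-group flag rates and the ratio of the lowest to the highest rate. Group labels are hypothetical; the 0.8 screening level borrows the "four-fifths" heuristic from US employment selection guidelines purely as an illustration, and which attributes may be used or tested is governed by applicable insurance regulation.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple

SCREENING_LEVEL = 0.8  # illustrative heuristic, not a regulatory threshold for insurance


def flag_rates_by_group(rows: Iterable[Tuple[str, bool]]) -> Dict[str, float]:
    """Share of claims flagged within each group; rows are (group, flagged)."""
    totals: Dict[str, int] = defaultdict(int)
    flags: Dict[str, int] = defaultdict(int)
    for group, flagged in rows:
        totals[group] += 1
        flags[group] += int(flagged)
    return {g: flags[g] / totals[g] for g in totals}


def rate_ratio(rates: Dict[str, float]) -> float:
    """Lowest group rate divided by highest; 1.0 means identical rates."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest > 0 else 1.0
```

A ratio below the screening level does not prove bias; it marks where a deeper review of features, labels, and outcomes is warranted.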

In Summary:

- KPIs such as alert rate, qualification rate, and model drift indicators ensure continuous, outcome-driven monitoring.

- Auditability and transparency provide traceability, explainability, and compliance with regulatory expectations.

- Ethical AI safeguards mitigate bias and manipulation risks while ensuring accountability and human oversight.

- Continuous oversight transforms predictive models from technical outputs into reliable, measurable business tools.

Conclusion: Turning Predictive Models into Measurable Claims Impact

The success of predictive analytics in insurance claims is not determined by algorithmic complexity alone. True impact emerges from well-prepared data, disciplined model governance, and careful deployment, which together translate predictions into actionable insights. Without these pillars, even advanced models may fail to deliver reliable operational and financial outcomes.

Organizations must prioritize data readiness, monitoring for model drift, and ethical oversight, while balancing the trade-offs between predictability and interpretability. Deployment discipline ensures model outputs drive tactical claim decisions and portfolio-level insights, supporting efficiency, loss mitigation, and risk-aware decision-making.

To realize measurable value, insurers can assess claims analytics maturity, evaluate whether existing models are production-ready, and identify gaps in data quality, governance, or monitoring that limit predictive accuracy. Explore your organization’s predictive analytics readiness and discover where targeted improvements can deliver real-world claims impact.

For a structured perspective on your current approach, speak with an expert to assess your claims analytics maturity and identify the highest-impact opportunities.

Frequently Asked Questions (FAQ)

How are predictive analytics models used in insurance claims?

Predictive analytics models rank and segment claims by severity, frequency, and potential loss, guiding adjuster prioritization and resource allocation. They provide probability scores, dollar-value estimates, and risk flags for individual claims.

These outputs also inform portfolio-level insights, enabling claims leaders to identify patterns, forecast loss trends, and proactively address high-impact claims such as litigation, jumpers, or fraud.

Are machine learning models replacing GLMs in insurance?

No. GLMs remain foundational in insurance due to interpretability, regulatory acceptance, and alignment with actuarial practices. Machine learning is typically used to augment GLMs, enhancing predictions where GLMs face limitations.

ML models complement GLMs by identifying non-linear relationships and complex feature interactions, but most production environments use hybrid approaches that balance accuracy, interpretability, and compliance.

What data is required to build reliable claims prediction models?

Reliable models require high-quality structured and unstructured data, including historical claim records, adjuster notes, and claim narratives. Inputs must have sufficient historical depth, stable labels, and minimal noise.

Unstructured data can be leveraged via NLP to extract signals for severity, fraud, and litigation risk. The model’s predictive power depends on clean, representative, and consistent data rather than volume alone.

How early in the claim lifecycle can risk be predicted?

Risk can often be predicted at the first notice of loss (FNOL) using structured data and early-cycle narrative signals. Predictive outputs assign probability scores, severity estimates, and risk tiers immediately.

Early-cycle predictions enable proactive allocation of adjuster effort, faster resolution of low-risk claims, and early identification of high-impact claims, reducing potential leakage and improving cycle times.

How do insurers measure the success of predictive models?

Success is measured using KPIs such as alert rate, qualification rate, acceptance/investigation rate, impact/transformation rate, and model drift indicators. These metrics link model outputs directly to operational and financial outcomes.

Continuous monitoring ensures models remain accurate, relevant, and aligned with business objectives. Insights are evaluated at both the individual claim level and portfolio level for full organizational impact.

What are the regulatory risks of using AI in claims decisions?

Regulatory risks include lack of auditability, insufficient transparency, and failure to explain or justify automated decisions. Non-compliance can result in fines, reputational damage, and operational constraints.

Maintaining traceable, explainable models with robust governance frameworks mitigates these risks. High-level explainability techniques and human oversight ensure AI decisions remain defensible in audits or regulatory reviews.

Can predictive models reduce fraud without increasing false positives?

They can. Predictive models identify high-risk claims using patterns and signals rather than rigid rules, allowing insurers to target potential fraud early. Probability scores let insurers set alert thresholds that concentrate investigation effort on the highest-risk claims.

The effectiveness depends on model quality and data integrity. Properly designed models minimize false positives while still detecting unusual or high-severity claims, balancing operational efficiency with risk mitigation.

Glossary

Predictive Analytics

The use of statistical and machine learning models to forecast future claim outcomes based on historical insurance data, informing operational and strategic decisions.

Generalized Linear Model (GLM)

A statistical modeling framework widely used in insurance for pricing, risk estimation, and claims forecasting, providing interpretability and regulatory defensibility.

Gradient Boosting Machine (GBM)

An ensemble machine learning technique that iteratively combines weak prediction models to improve accuracy, often used for claims severity and fraud prediction.

Severity Scoring

A model output estimating the potential cost or complexity of a claim, used to prioritize adjuster effort and inform case reserving.

Model Drift

The degradation of a model’s predictive performance over time caused by shifts in underlying data patterns, claim types, or external conditions.

Interpretability

The degree to which a model’s predictions can be understood and explained, particularly regarding how input features influence outputs.

Incorruptibility

The resistance of a model to manipulation or exploitation, ensuring predictions cannot be intentionally altered or gamed by internal or external actors.