Best For

• Real-time analytics on IoT, mobile, or application logs

• User behavior analytics (clickstreams, engagement tracking)

• Semi-structured operational data

• Location-based analytics and logistics apps

• Applications requiring schema flexibility + low-latency reads

Real-time analytics plays a crucial role in industries such as finance and e-commerce, where organizations need immediate insights they can act on. However, choosing the best database for real-time analytics can be daunting.

This blog post will guide you through the top databases, compare relational and NoSQL databases, and discuss the key factors to consider when selecting the best database for real-time analytics needs. Real-time analytics is one of several critical emerging data trends that business leaders must prepare for as organizations increasingly demand instant insights for competitive decision-making.

ClickHouse, Apache Druid, SingleStore, Couchbase, and Apache Pinot are considered among the best databases for real-time analytics due to their low query latency and high performance.

Analytics Database Summary

- Real-time analytics databases enable businesses to access and analyze data quickly and accurately. Scalability, speed, data type support, and budget constraints are the key criteria when choosing one.

- Real-time analytics databases are optimized for analytical queries and can deliver sub-second queries for large and complex datasets, enabling fast and efficient data-driven decision-making.

- Popular real-time analytics databases include ClickHouse, SingleStore, Apache Druid, and Apache Pinot.

- Use cases for real-time analytics range from observability to fraud prevention & process optimization.

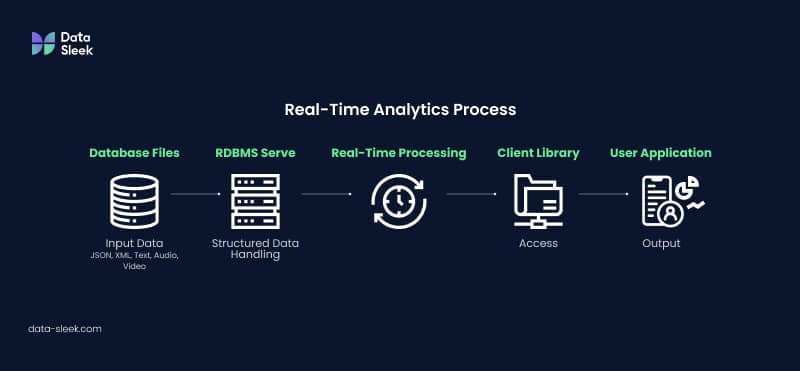

Understanding Real-Time Analytics

Real-time analytics is the process of gathering, examining, and responding to data immediately, allowing businesses to store data and analyze it in real time. This capability is essential for companies like Netflix and Prime Video, as they rely on real-time databases to deliver seamless user experiences.

Real-time analytics databases are designed for high-velocity data streams and must support high ingestion throughput to handle millions of events per second, ensuring data freshness.

But how do you choose the right database for your real-time analytics needs? The essential elements to consider when selecting a database for real-time analytics are scalability, speed, data type support, and budget limitations. Real-time analytics databases often utilize columnar storage to optimize query performance and provide low-latency responses for time-sensitive queries. These factors apply to both SQL and NoSQL databases, which we will explore further in this post.

Traditional databases are optimized for transactional workloads, while real-time analytics databases are designed for high-velocity data streams and low-latency queries.

Key Factors To Consider For Best Database for Real-Time Analytics

This section will delve deeper into the essential elements mentioned earlier: scalability, speed, data type support, and budget constraints. Evaluating analytical workloads and query patterns is also crucial for selecting the right database, as these factors impact performance and architecture decisions.

Each factor is critical in determining the best database for your real-time analytics requirements. When considering budget constraints, it’s important to focus on cost efficiency. Decoupling compute and storage in real-time analytics databases allows for independent scaling of resources based on workload demands, optimizing both performance and costs.

High concurrency support is also essential for real-time analytics databases to handle multiple user requests simultaneously.

Let’s examine these factors and how they can influence your database selection.

Scalability

Scalability refers to a system’s ability to manage increasing volumes of data and users while maintaining satisfactory performance. When planning for scalability, it is essential to consider the number of users who will access the analytics system, as higher concurrency and a growing user base can significantly impact performance and necessitate additional planning.

Another critical aspect of database scalability is choosing the right database engine. Not all databases are created equal. A common example is a startup that uses MySQL or Postgres for analytics. Find out more about why some OLTP databases are not meant for analytics, especially real-time analytics. The same holds for search workloads: MySQL’s built-in full-text search struggles with scalability and multi-predicate queries, making it a poor fit for any application that needs fast, complex text retrieval at scale.

A scalable database is essential for businesses that require storing, processing, and analyzing large amounts of data. Scalability can be ensured by incorporating additional resources, optimizing the system architecture, or choosing a database with built-in stream processing capabilities. Efficient use of system resources and a massively parallel processing architecture are key for scaling analytical workloads in real-time analytics databases.

Achieving high performance at scale requires not just the right database engine, but a thoughtfully designed data architecture that aligns with your analytical workload, concurrency demands, and growth trajectory.

Scalable databases offer cost-effectiveness, efficiency, data-driven decision-making, competitiveness, and improved customer experience. Additionally, some databases provide an intuitive web UI for easier management and monitoring, which can further enhance the benefits of a scalable database.

Speed

Speed is essential in real-time analytics, providing businesses with access to up-to-date data and enabling swift queries, which in turn facilitate immediate action and proactive problem-solving. The fastest analytical databases are designed to deliver low query latency, often returning answers to complex queries in milliseconds, which is critical for real-time analytics. Real-time analytics can enhance business agility, campaign performance, and customer understanding through streaming analytics. See how Data-Sleek helped Numerade scale its EdTech platform with a modern data architecture.

Streaming databases, capable of extracting, transforming, and loading millions of records in seconds, offer accelerated analysis and reduced development time. By opting for a streaming database, whether using a relational database management system (RDBMS) or a NoSQL database, you can effectively manage large volumes of streaming data for real-time analytics.

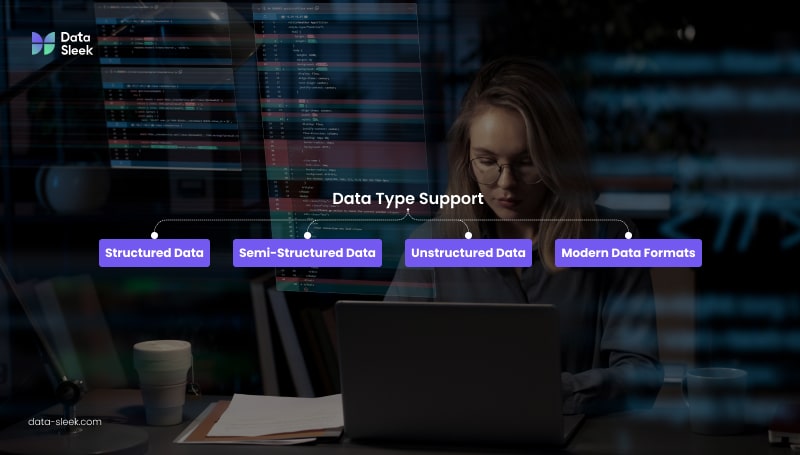

Data Type Support

Real-time analytics databases support various data types, including semi-structured and modern data formats, without implementing ETL processes. These databases can accommodate structured, semi-structured, and unstructured data, such as relational, JSON, XML, text, audio, and video. To enable fast and reliable data ingestion and analysis, real-time analytics databases must also seamlessly connect with various data sources, ensuring compatibility with multiple databases and data streams.

Data type support is essential in real-time analytics, facilitating efficient and accurate data analysis. The primary challenge of data type support in real-time analytics is ensuring that the data is stored in a format compatible with the database and that the database can process the data quickly and accurately.

Budget Constraints

Implementing real-time analytics can be costly because it requires computing power, specialized software, and expert personnel. The total expense depends on which of these resources you need and on the cost of procuring and sustaining them.

Cloud data warehouses offer scalable storage and compute options, which can help optimize costs and improve cost efficiency for real-time analytics.

Considering these budget constraints, you can make an informed decision on your organization’s most cost-effective and efficient real-time analytics database.

Are you struggling to keep up with the ever-changing data landscape? Do you wish you could harness the potential of real-time insights to make informed business decisions? Look no further! Data Sleek is here to help.

Data Ingestion and Processing for Real-Time Analytics

Efficient data ingestion and processing are at the heart of any high-performing real-time analytics database. In today’s fast-paced business environment, organizations must be able to capture and process data without delay from a wide variety of sources, ranging from transactional systems and IoT devices to user interactions and financial systems. This capability is essential for real-time analytics, where the value of insights often depends on their immediacy.

Data ingestion refers to the process of collecting and importing data as it is generated, whether it’s streaming data from sensors, logs, or transactional records. Modern analytics databases are engineered to support real-time data ingestion, allowing businesses to continuously feed fresh data into their systems. This ensures that analytics platforms can process data in near real time, supporting real-time analytics and enabling organizations to respond to events as they happen.

Once data is ingested, the ability to process it instantly is what sets real-time analytics databases apart from traditional batch-oriented systems. These databases are optimized for low latency and high throughput, making it possible to analyze operational and financial data as soon as it arrives. This is particularly critical in financial systems, where sub-second decision-making can impact trading outcomes, risk management, and fraud detection.

By leveraging advanced data ingestion and processing capabilities, organizations can build real-time dashboards, monitor business metrics, and gain meaningful insights from both structured and unstructured data. This empowers teams to act on the most current information, optimize business processes, and maintain a competitive edge in dynamic markets.

In summary, robust data ingestion and real-time data processing are essential for any analytics database aiming to deliver accurate, timely, and actionable insights. Investing in these capabilities ensures that your data platform can keep pace with the demands of modern business and support a wide range of real-time analytics workloads.

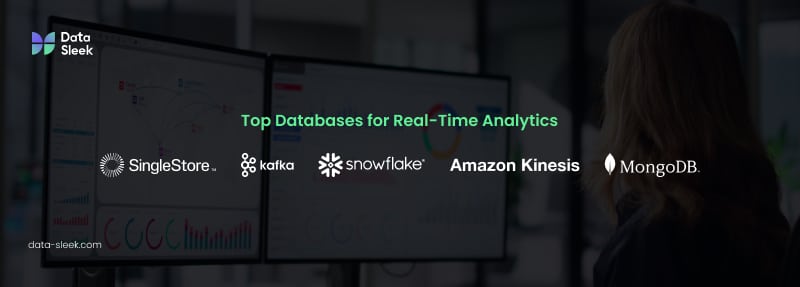

Best Databases for Real-Time Analytics in 2025

Now that we have discussed the key factors to consider when choosing a real-time analytics database, let’s explore the top databases in the market: ClickHouse, SingleStore, Apache Pinot, Apache Druid, MongoDB, and Snowflake. These databases are designed to maintain high data freshness by supporting high write throughput, and they can efficiently process both operational and historical data. They support data streams and include built-in tools for stream processing, such as windowing functions and real-time aggregations.

Furthermore, these databases often utilize columnar storage to reduce scan size and optimize analytical queries. Each database offers unique features and capabilities that make it suitable for real-time analytics, such as local caching, support for data buffering and streaming data, and high scalability.
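To make the idea of windowing functions and real-time aggregations more concrete, here is a minimal Python sketch of a tumbling (fixed, non-overlapping) window count. It is an illustration only, not how any of the databases above implement it; the function name `tumbling_window_counts` and the sample click events are assumptions made up for this example.

```python
from collections import defaultdict

def tumbling_window_counts(events, window_seconds):
    """Count events per (window_start, key) for fixed, non-overlapping windows.

    `events` is an iterable of (timestamp_seconds, key) pairs.
    """
    counts = defaultdict(int)
    for ts, key in events:
        # Align each timestamp to the start of the window it falls into.
        window_start = ts - (ts % window_seconds)
        counts[(window_start, key)] += 1
    return dict(counts)

# Hypothetical click events: (seconds since start, page).
events = [(0, "home"), (12, "home"), (59, "cart"),
          (60, "home"), (61, "cart"), (119, "cart")]

per_minute = tumbling_window_counts(events, window_seconds=60)
for (window_start, page), n in sorted(per_minute.items()):
    print(window_start, page, n)
```

A real streaming engine would also have to deal with late or out-of-order events, which this sketch ignores.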

In the following sections, we will examine each of these databases in greater detail.

Clickhouse — Best for Pure Real-Time OLAP

Why it’s one of the best databases for real-time analytics:

ClickHouse is purpose-built for extremely fast analytical queries on massive datasets. It uses a columnar storage engine, vectorized execution, and aggressive compression to deliver sub-second query latency even when scanning billions of rows.
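As a rough illustration of why columnar storage helps analytical queries, the Python sketch below stores the same small table in a row layout and in a column layout, then sums a single column. This is a toy model under simplified assumptions, not ClickHouse’s storage engine; the table contents and function names are hypothetical.

```python
from array import array

# The same three-column table in two layouts (hypothetical data).
rows = [
    {"user_id": 1, "country": "US", "amount": 20.0},
    {"user_id": 2, "country": "FR", "amount": 35.5},
    {"user_id": 3, "country": "US", "amount": 4.5},
]

# Column-oriented layout: one contiguous sequence per column.
columns = {
    "user_id": array("q", (r["user_id"] for r in rows)),
    "country": [r["country"] for r in rows],
    "amount": array("d", (r["amount"] for r in rows)),
}

def total_amount_row_store(rows):
    # Every full row is visited even though only `amount` is needed.
    return sum(r["amount"] for r in rows)

def total_amount_column_store(columns):
    # Only the `amount` column is read; the other columns are never touched.
    return sum(columns["amount"])

print(total_amount_row_store(rows), total_amount_column_store(columns))
```

Both functions return the same total; the difference is how much data each one has to read, which is what matters once a table has billions of rows and dozens of columns.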

Key Strengths

• Fastest OLAP performance in the market for real-time analytics workloads.

• High ingestion throughput (millions of rows per second).

• Ideal for dashboards, metrics, observability, and log analytics.

• Fault-tolerant distributed architecture.

• Supports SQL + materialized views for real-time transformations.

• Cloud-native managed service available (ClickHouse Cloud).

Best For

• Companies needing low-latency queries over huge datasets.

• Real-time metrics monitoring (e.g., infrastructure, product analytics).

• AI/ML feature stores requiring fast aggregations.

Limitations

• Not designed for heavy OLTP workloads.

• Requires thoughtful cluster sizing for optimal performance.

Bottom Line:

ClickHouse has become a strong contender in the real-time data analytics market, and ClickHouse Cloud offers a managed, scalable service.

We recently used it for real-time operational analytics and were impressed with its features. ClickHouse uses system resources efficiently to deliver high performance and answers analytical queries with sub-second response times. As an OLAP database optimized for high ingestion throughput, it is often used in environments that require fault tolerance, and its fault-tolerant design helps preserve data integrity and availability during failures.

We are “ClickHouse Developer” certified because we believe this real-time analytics database will keep growing.

SingleStore — Best Hybrid OLTP + Real-Time Analytics

Why it’s one of the best databases for real-time analytics:

SingleStore (formerly MemSQL) is unique because it combines transactional (OLTP) and analytical (OLAP) capabilities in a single, distributed SQL engine. This makes it one of the best databases for real-time analytics when applications require both fast writes and fast queries. Its memory-first architecture, vectorized execution, and universal storage engine allow it to deliver millisecond-level latency for mixed workloads.

Key Strengths

• Hybrid OLTP + OLAP in a single database

• Low-latency analytical queries on fresh operational data

• Supports columnstore, rowstore, and in-memory tables

• MySQL wire protocol compatibility (easy migration and integration)

• Excellent for both real-time dashboards and transactional systems

• Built-in Pipelines for high-speed ingestion from Kafka, S3, and cloud sources

• Cloud-native and horizontally scalable

Best For

• Applications needing real-time insights directly from operational data

• Financial services, logistics, SaaS platforms needing analytics + transactions

• High-concurrency dashboards

• Low-latency machine learning inference or feature stores

Limitations

• More expensive than some open-source alternatives

• Requires well-designed resource allocation for optimal performance

Bottom Line:

SingleStore is the ideal hybrid database for combining OLTP performance with real-time analytics. This architecture is perfect for businesses that need both workloads without duplicating data systems. Our SingleStore consulting team can help you migrate smoothly and implement this platform for your business needs. We have benchmarked ClickHouse against SingleStore in the past, and so has SingleStore. For single-table queries, ClickHouse is very fast. However, its performance degrades when you need to join multiple tables.

“The largest table we’ve seen in SingleStore had more than 900 billion rows using 52 TB of disk space (compressed). Queries were still performing with a P99 < 5 seconds,” says Bala.
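For readers unfamiliar with the term, P99 is the latency that 99% of queries stay at or below. The Python sketch below shows one common way to compute it, the nearest-rank method. The latency samples are invented for illustration and are not the benchmark figures quoted above.

```python
import math

def percentile_nearest_rank(samples, pct):
    """Return the pct-th percentile of `samples` using the nearest-rank method."""
    if not samples:
        raise ValueError("samples must not be empty")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in (0, 100]")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)  # 1-based rank
    return ordered[rank - 1]

# Hypothetical query latencies in seconds: 98 fast queries and 2 slow ones.
latencies = [0.2] * 98 + [4.1, 7.5]

p99 = percentile_nearest_rank(latencies, 99)
print(p99, p99 < 5)
```

Note that a P99 target tolerates a small number of slow outliers: in this sample one query takes 7.5 seconds, yet the P99 is still under 5 seconds.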

Apache Pinot — Best for User-Facing Analytics

Why it’s one of the best databases for real-time analytics:

Originally created at LinkedIn, Apache Pinot is optimized for sub-second queries powering user-facing analytics—think dashboards inside SaaS products, personalization engines, or recommendation systems.

Key Strengths

• Ultra-low query latency—even with high concurrency.

• Excellent for embedded analytics and customer-facing dashboards.

• Integrates seamlessly with Kafka, Kinesis, and Flink for streaming ingestion.

• Strong indexing: inverted, star-tree, range, sorted indexes for fast lookups.

• Supports real-time + offline data in a unified architecture.
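To show why indexing matters for fast lookups, here is a minimal Python sketch of an inverted index, one of the index types listed above. It only illustrates the general idea and is not Pinot’s implementation; the rows and the helper name `build_inverted_index` are hypothetical.

```python
from collections import defaultdict

def build_inverted_index(rows, column):
    """Map each distinct value of `column` to the set of row ids that contain it."""
    index = defaultdict(set)
    for row_id, row in enumerate(rows):
        index[row[column]].add(row_id)
    return index

# Hypothetical event rows.
rows = [
    {"country": "US", "device": "ios"},
    {"country": "FR", "device": "android"},
    {"country": "US", "device": "android"},
    {"country": "US", "device": "ios"},
]

by_country = build_inverted_index(rows, "country")
by_device = build_inverted_index(rows, "device")

# A filter like country = 'US' AND device = 'ios' becomes a set intersection,
# so rows outside the matching ids never have to be scanned.
matching = sorted(by_country["US"] & by_device["ios"])
print(matching)
```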

Best For

• SaaS companies needing to serve millions of dashboard queries per day.

• Personalization, recommendations, and analytics APIs.

• Clickstream analytics with fresh, real-time data.

Limitations

• Operational complexity (multi-component system).

• More opinionated ingestion methods compared to Druid.

Bottom Line:

If your application needs real-time analytics exposed directly to customers, Apache Pinot is the best database for low-latency user-facing workloads.

Apache Druid — Best for High-Ingestion Event Streams

Why it’s one of the best databases for real-time analytics:

Apache Druid is designed for heavy real-time ingestion, especially from event streams such as Kafka, logs, telemetry, and time-series data. It excels at workloads needing instant data freshness with scalable roll-up and pre-aggregation.
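Roll-up can be pictured as pre-aggregating raw events at ingestion time, so that queries touch fewer rows. The Python sketch below groups events by a truncated timestamp and one dimension. It is a simplified model under assumed field names (`country`, `bytes`), not Druid’s ingestion code.

```python
from collections import defaultdict

def roll_up(events, granularity_seconds):
    """Pre-aggregate raw events by truncated timestamp and dimension value."""
    aggregated = defaultdict(lambda: {"count": 0, "bytes": 0})
    for ts, country, nbytes in events:
        # Truncate the timestamp to the chosen granularity.
        bucket = ts - (ts % granularity_seconds)
        row = aggregated[(bucket, country)]
        row["count"] += 1
        row["bytes"] += nbytes
    return dict(aggregated)

# Hypothetical raw events: (seconds since start, country, bytes transferred).
events = [
    (0, "US", 300), (10, "US", 200), (59, "FR", 50),
    (60, "US", 100), (75, "US", 400),
]

rolled = roll_up(events, granularity_seconds=60)
print(len(events), "raw rows ->", len(rolled), "rolled-up rows")
```

The trade-off is that individual events can no longer be recovered from the rolled-up rows, so the granularity should match the finest level your queries need.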

Key Strengths

• Handles massive ingestion rates with minimal latency.

• Optimized for time-series and event-based analytics.

• Hybrid architecture combining real-time, historical, and deep storage tiers.

• Supports approximate queries (HyperLogLog, Theta Sketches) for large datasets.

• Battle-tested in companies like Netflix, Airbnb, and Lyft.
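Approximate queries trade a small error for a large saving in memory. As a hedged illustration, the Python sketch below estimates a distinct count with a K-Minimum-Values (KMV) sketch, a simple relative of the Theta sketch family mentioned above. It is not Druid’s or any library’s implementation, and the helper names and the choice of SHA-1 as the hash are assumptions for this example.

```python
import hashlib
import heapq

def unit_hash(value):
    """Hash a value to a roughly uniform float between 0 and 1."""
    digest = hashlib.sha1(str(value).encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") / 2**64

def kmv_estimate(values, k=1024):
    """Estimate the number of distinct values while keeping only k hashes."""
    smallest = []    # max-heap (stored negated) of the k smallest hashes seen
    members = set()  # the same hashes, used to skip duplicates
    for value in values:
        h = unit_hash(value)
        if h in members:
            continue
        if len(smallest) < k:
            heapq.heappush(smallest, -h)
            members.add(h)
        elif h < -smallest[0]:
            # Replace the current largest of the k smallest hashes.
            evicted = -heapq.heappushpop(smallest, -h)
            members.discard(evicted)
            members.add(h)
    if len(smallest) < k:
        return float(len(smallest))  # fewer than k distinct values: exact count
    kth_smallest = -smallest[0]
    return (k - 1) / kth_smallest

# Hypothetical stream: 50,000 events from 20,000 distinct user ids.
user_ids = [i % 20000 for i in range(50000)]
print(round(kmv_estimate(user_ids)))
```

The intuition is that if n distinct hashes are spread evenly between 0 and 1, the k-th smallest one sits near k/n, so n can be estimated from it. A larger k gives a tighter estimate at the cost of more memory.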

Best For

• Clickstream analytics

• Application observability (logs, metrics, traces)

• IoT telemetry

• Fraud detection and anomaly monitoring

• Any system requiring fresh event data + fast queries

Limitations

• More operational overhead than managed services like ClickHouse Cloud.

• Queries are fast but not as fast as Pinot for user-facing, millisecond latency.

Bottom Line:

Apache Druid is the best choice for real-time analytics on high-volume event streams, especially when ingestion speed and time-series analysis are top priorities.

MongoDB — Best for Semi-Structured Real-Time Data

Why it’s one of the best databases for real-time analytics:

MongoDB excels when dealing with semi-structured or fast-changing JSON data, making it a strong candidate for real-time analytics in applications that ingest highly dynamic content. Its flexible schema, built-in aggregation pipeline, and horizontal sharding allow it to process real-time data at scale.

Key Strengths

• Optimized for semi-structured data (JSON/BSON)

• Built-in Aggregation Framework supports complex real-time transformations

• Horizontal sharding for large-scale event ingestion

• Strong change streams for event-driven architectures

• Ideal for embedded analytics inside applications

• Support for geospatial queries, time-series collections, and flexible indexing

Limitations

• Query speed can degrade on very large datasets without careful index and shard planning

• Not as fast as OLAP-optimized engines (e.g., ClickHouse or SingleStore) for heavy aggregations

Bottom Line:

MongoDB is the best database for real-time analytics on semi-structured or rapidly evolving data, especially when flexibility and developer agility are key.

Snowflake — Best Cloud Data Warehouse with Near Real-Time Capabilities

Why it’s one of the best databases for near real-time analytics:

Snowflake is not a pure real-time analytics engine, but it is the best cloud data warehouse for organizations needing near real-time analytics at scale. With separate compute/storage layers, automatic scalability, and powerful micro-partitioning, Snowflake delivers strong performance for frequent-refresh analytics.

Snowflake often powers dashboards updated every few seconds to minutes—not millisecond-level ingestion, but fast enough for BI and operational analytics in many enterprises.
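One way to picture the benefit of micro-partitioning is partition pruning: if each partition records the minimum and maximum value of a column, a range filter can skip partitions whose range cannot match. The Python sketch below models this with plain lists. It is a conceptual illustration only, with hypothetical function names and data, and does not reflect Snowflake’s internals.

```python
def build_partitions(values, partition_size):
    """Split values into fixed-size partitions and record min/max metadata."""
    partitions = []
    for start in range(0, len(values), partition_size):
        chunk = values[start:start + partition_size]
        partitions.append({"min": min(chunk), "max": max(chunk), "rows": chunk})
    return partitions

def count_in_range(partitions, low, high):
    """Count values in [low, high], scanning only partitions that may match."""
    matches = 0
    scanned = 0
    for part in partitions:
        if part["max"] < low or part["min"] > high:
            continue  # pruned using metadata alone, rows are never read
        scanned += 1
        matches += sum(1 for v in part["rows"] if low <= v <= high)
    return matches, scanned

# Hypothetical column of order days 1..100, loaded in sorted order.
order_days = list(range(1, 101))
partitions = build_partitions(order_days, partition_size=10)

matches, scanned = count_in_range(partitions, low=42, high=47)
print(matches, "matching rows;", scanned, "of", len(partitions), "partitions scanned")
```

Pruning works best when the data is loaded in an order that correlates with the filter column; if values were shuffled randomly across partitions, most partitions would overlap the range and few could be skipped.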

Key Strengths

• Fully managed, highly scalable cloud warehouse

• Automatically optimizes queries using micro-partitioning and caching

• Ideal for large-scale, complex analytical workloads

• Concurrency scaling prevents performance bottlenecks

• Snowpipe enables continuous data ingestion (near real time)

• Easy integration with Fivetran, dbt, Kafka connectors, and ETL pipelines

• Strong governance and security built in

Best For

• Real-time pipelines where 1–60 second latency is acceptable

• Enterprise BI and operational reporting

• Large organizations needing massive concurrency

• AI/ML workloads integrated with large cloud datasets

• Businesses running analytics across diverse sources in a centralized warehouse

Limitations

• Not suitable for millisecond-latency analytics

• Ingestion costs can rise at scale

• Not ideal for high-velocity streaming analytics without extra infrastructure (Kafka, Kinesis, etc.)

Bottom Line:

Snowflake is the best cloud data warehouse for near real-time analytics, offering scalability, simplicity, and strong performance—but it is not a replacement for true streaming-first databases.

Best Database for Real-Time Analytics: Side-by-Side Comparison Table (2026)

| Database | Best For | Key Strengths | Limitations | Bottom Line |
| --- | --- | --- | --- | --- |
| ClickHouse | Pure real-time OLAP analytics | Fastest OLAP performance; high ingestion throughput; ideal for dashboards and observability; distributed architecture; supports SQL and materialized views; ClickHouse Cloud available. | Not built for OLTP; requires careful cluster sizing. | One of the fastest real-time OLAP databases; ideal for large-scale analytical workloads and operational analytics. |
| SingleStore | Hybrid OLTP + real-time analytics | OLTP + OLAP in one engine; millisecond-latency queries; supports columnstore/rowstore/in-memory tables; MySQL wire protocol; ingestion pipelines; horizontally scalable. | Higher cost; requires tuned resource allocation. | Best hybrid engine for combining OLTP + real-time OLAP; perfect for businesses wanting one unified system. |
| Apache Pinot | User-facing, sub-second real-time analytics | Ultra-low latency; high concurrency; strong indexing; integrates with Kafka/Kinesis/Flink; unified real-time + offline architecture. | Operational complexity; opinionated ingestion model. | Best solution for real-time analytics directly exposed to customers, such as SaaS dashboards and personalization engines. |
| Apache Druid | High-ingestion event streams | Massive ingestion throughput; optimized for time-series/event analytics; hybrid real-time + historical architecture; supports approximate queries; used by Netflix/Airbnb. | Higher operational overhead; slower than Pinot for UI-level latency. | Ideal for large-scale event streams, clickstreams, IoT telemetry, and fraud/anomaly detection. |
| MongoDB | Semi-structured real-time data | Great for JSON/BSON; strong aggregation pipeline; horizontal sharding; change streams; geospatial + time-series support. | Slower for heavy OLAP workloads; indexing/sharding complexity. | Great choice for real-time analytics on semi-structured or fast-changing data; highly flexible for developers. |
| Snowflake | Near real-time cloud analytics | Fully managed; highly scalable; micro-partitioning + caching; concurrency scaling; Snowpipe for continuous ingestion; strong ecosystem integrations. | Not millisecond-latency; ingestion cost at scale; not ideal for high-velocity streaming. | Best cloud warehouse for near real-time analytics; excellent for enterprise BI but not a true streaming-first engine. |

Why Real-Time Analytics Is Essential for Big Data and BI

Real-time analytics has become a cornerstone of big data analytics and modern business intelligence (BI) strategies. As organizations generate massive volumes of data, the ability to run an analytical query and receive insights instantly is critical for timely decision-making. Real-time analytics databases must support thousands of concurrent requests, even on complex analytical queries, to deliver timely results. These databases are designed to process both operational data and historical data, often integrating with data lakes for scalable analytics and efficient querying.

This level of responsiveness is particularly vital for complex project execution; for example, construction data warehousing enables instant access to job costing, schedule variance, and change order status across all active projects. Additionally, real-time analytics databases must ensure data consistency and integrity across distributed systems without delay. Unlike traditional batch processing, real-time analytics empowers teams to detect trends, respond to events, and optimize operations as they happen—giving businesses a competitive edge in dynamic markets.

- Warehouse and inventory management: Companies implement a real-time, unified pricing and inventory management system that ensures consistency across central warehouses, store warehouses, and store shelves. The system automatically triggers replenishment or promotions based on real-time stock levels and sales data. The transactional database underneath that real-time layer is governed by a different set of trade-offs, since it has to handle every stock write before any analytics workload sees the data.

- Order fulfillment and logistics systems: Today, these systems often rely on real-time analytics to break down incoming orders into actionable tasks, assigning them to staff based on factors like location, availability, and workload. These systems also coordinate product movement through distribution channels and optimize delivery routes using live data such as traffic conditions, order volume, and driver proximity—ensuring speed, efficiency, and accuracy in last-mile delivery.

- Fresh supply chain monitoring: Some systems monitor fresh produce in real time using Wi-Fi cameras, temperature sensors, and GPS to ensure product quality and fast delivery.

How To Use Real-Time Analytics to Stop Ransomware Attacks

Ralph Aceves, CEO and Co-Founder of HackerStrike, leverages real-time analytics to combat ransomware and cyber fraud. HackerStrike’s platform detects anomalies indicative of ransomware activity by continuously monitoring device behavior and employing AI-driven analysis. This proactive approach enables the system to identify and block threats before they can encrypt data, effectively preventing attacks in both cloud and on-premise environments. Real-time analytics databases must be fault-tolerant and ensure data integrity to maintain continuous protection against threats. Processing streaming data and data flows is essential for real-time security analytics, enabling instant detection and response. These databases should also provide recovery mechanisms to ensure data availability. Aceves emphasizes that traditional security measures often fall short against rapidly evolving cyber threats, highlighting the necessity for real-time, intelligent solutions in modern cybersecurity strategies.

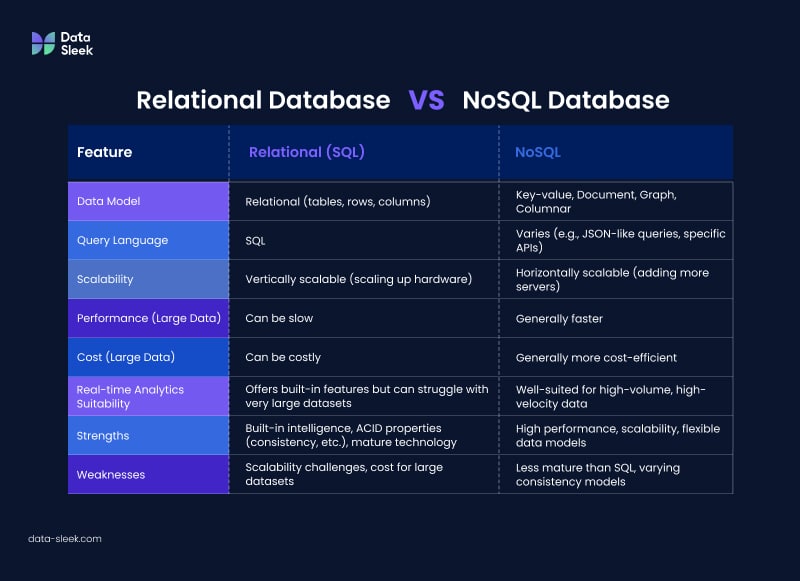

Comparing Relational vs. NoSQL Databases for Real-Time Analytics

Relational databases, such as PostgreSQL, Microsoft SQL Server, and Oracle Database, are based on the relational model and use Structured Query Language (SQL) to interact with the database. While they offer built-in intelligence, scalability, and availability, traditional relational databases are not inherently designed for real-time analytics and often rely on batch processing, which can create bottlenecks and increase latency for real-time applications. For example, Postgres and MySQL excel at complex historical queries but often struggle with real-time responsiveness, scalability, and efficient stream processing without extensions or specific optimizations.

It’s important to distinguish between OLAP databases, which are optimized for analytical queries and low-latency data processing, and online transaction processing (OLTP) systems, which are designed primarily for handling transactions efficiently. Postgres, for instance, is primarily an OLTP database, which can lead to performance issues in analytics-focused applications.

On the other hand, NoSQL databases, like DynamoDB and MongoDB, are non-relational databases built on different data models, including key-value, document, graph, and columnar. They offer in-memory caching, consistent latency, and automatic scaling, making them more suitable for handling large volumes of data quickly and cost-efficiently.

Real-time analytics capabilities can also be added to existing systems through extensions or enhancements to existing relational databases, enabling real-time streaming without a complete infrastructure overhaul.

In conclusion, both relational and NoSQL databases have their pros and cons when it comes to real-time analytics. The choice between the two depends on your organization’s specific needs, such as the types of data being processed, the required speed of analysis, and the budget constraints. By understanding the advantages and disadvantages of each database type, you can make an informed decision that best suits your real-time analytics requirements.

Integrating Real-Time Analytics with Existing Infrastructure

Real-time data integration refers to processing and transferring data immediately after it is collected, which can be accomplished through technologies such as change data capture (CDC) and transform-in-flight. These technologies enable organizations to improve accuracy, expedite decision-making, and improve the customer experience by providing immediate insights into customer data and transactions. Having complete control over the data stack and ensuring compatibility with existing systems is crucial for successful integration.
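As a minimal sketch of what change data capture looks like on the receiving side, the Python example below replays an ordered stream of insert, update, and delete events onto an in-memory replica. The event shape (`op`, `key`, `row`) is an assumption made up for this illustration and does not follow any specific CDC tool’s format.

```python
def apply_change_events(replica, events):
    """Apply ordered change events to an in-memory replica keyed by primary key."""
    for event in events:
        op, key = event["op"], event["key"]
        if op in ("insert", "update"):
            # Upsert: merge the changed columns into the current row.
            replica[key] = {**replica.get(key, {}), **event["row"]}
        elif op == "delete":
            replica.pop(key, None)
        else:
            raise ValueError(f"unknown operation: {op}")
    return replica

# Hypothetical change log emitted by a source database, in commit order.
change_log = [
    {"op": "insert", "key": 1, "row": {"status": "new", "total": 40}},
    {"op": "insert", "key": 2, "row": {"status": "new", "total": 15}},
    {"op": "update", "key": 1, "row": {"status": "paid"}},
    {"op": "delete", "key": 2},
]

orders = apply_change_events({}, change_log)
print(orders)
```

Because only the changes travel, the analytics side can stay close to the source without repeatedly re-copying whole tables. Applying events in commit order matters, since replaying them out of order could resurrect a deleted row.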

However, real-time data integration presents challenges such as latency, quality, and scalability. A well-designed data stack enables seamless real-time analytics and interoperability with existing infrastructure. By understanding and addressing these challenges, businesses can successfully integrate real-time analytics with their existing infrastructure and harness the power of real-time data.

Use Cases for Real-Time Analytics Databases

Real-time analytics databases are utilized in multiple industries, including e-commerce, finance, healthcare, and IoT. These databases offer various use cases, such as observability, user behavior, security and fraud analytics, IoT and telemetry, personalization and experience, fraud and error prevention, process optimization, preventive maintenance, regulatory compliance, credit scoring, financial trading, and detecting and blocking fraudulent transactions.

Embedded analytics and business intelligence are also key applications of real-time analytics databases, enabling integration with user-facing analytics platforms and tailored BI experiences. Real-time analytics databases are essential for industries requiring immediate insights, such as finance, logistics, and security. Organizations across various industries can gain valuable insights, streamline their processes, and improve their decision-making capabilities by leveraging real-time analytics databases.

For example, real-time analytics can help with regulatory compliance in the finance industry by providing immediate insights into customer data and transactions. It can also assist with credit scoring by providing instantaneous insights into customer creditworthiness, with financial trading by providing direct insights into market trends and prices, and with detecting and blocking fraudulent transactions by providing immediate insights into suspicious activity.

By managing data and leveraging real-time analytics databases, businesses can address various challenges and capitalize on opportunities in their respective industries.

Summary

In conclusion, real-time analytics databases are critical in various industries, allowing businesses to gain immediate insights and make informed decisions. Choosing the correct database for real-time analytics can be challenging. Our database consulting team can help you select and implement the right database for your real-time analytics needs. Don’t hesitate to contact us if you have questions.

By considering the critical factors of scalability, speed, data type support, and budget constraints, you can make an informed decision that best fits your organization’s needs. Additionally, database performance, data integrity, and support for analytical workloads and complex queries are critical factors in selecting the best database for real-time analytics. This blog post has provided an overview of the top databases for real-time analytics, including ClickHouse, SingleStore, Apache Pinot, Apache Druid, MongoDB, and Snowflake, as well as a comparison between relational and NoSQL databases. With this knowledge, you are better equipped to select the most suitable database for your real-time analytics requirements and capitalize on real-time data opportunities.

Frequently Asked Questions

Which database is used for real-time applications?

ClickHouse and SingleStore are the best options for real-time applications, as they offer many features that support scalability and high data availability. With its Real Application Clusters (RAC), Oracle Database provides a reliable and secure environment to run critical applications.

What is the most widely used database for real-time analytics?

MongoDB used to be a great choice, but it is not ideal for querying large data sets for real-time analytics. We usually recommend ClickHouse or SingleStore, databases built for real-time analytics and AI. Check out our data challenges section under our data architecture consulting services, and don’t hesitate to contact us if you have questions.

Is SQL good for real-time data?

SQL is well suited to real-time data analysis because it is an expressive query language that is widely adopted among enterprise developers and supported by most real-time analytics databases.

Which database engine is ideal for real-time analytics in memory?

Redis is great for in-memory real-time analytics, as it boasts a highly scalable caching layer that ensures optimal performance and AI-based transaction scoring that simplifies fraud detection. However, SingleStore supports an in-memory engine, SQL, and the MySQL wire protocol, making it a more accessible solution.

What is database analytics?

Database analytics is the practice of managing, analyzing, and extracting insights from data stored in databases. It uses analytical tools and techniques to understand trends and relationships in large datasets. Organizations can gain valuable insights and make better decisions by leveraging database analytics.