Introduction

SageMaker provides multiple tools and functionalities to label, build, train and deploy machine learning models at scale. One of the most popular is Notebook Instances, which are used to prepare and process data, write code to train models, deploy models to Amazon SageMaker hosting, and test or validate the models. I was recently working on a project that involved automating a SageMaker notebook.

There are multiple ways to deploy models in SageMaker using AWS Glue, as described here and here. You can also deploy models using the endpoint API. But what if you are not deploying models, and instead need to execute the same script again and again? SageMaker does not have a direct way to automate this right now. And what if you want to shut down the notebook instance as soon as the script has finished executing? Doing so saves money, since AWS charges for Notebook Instances on an hourly basis.


How do we achieve this?

Additional AWS features and services being used

- Lifecycle Configurations: A lifecycle configuration provides shell scripts that run only when you create the notebook instance or whenever you start one. They can be used to install packages or configure notebook instances.

- AWS CloudWatch: Amazon CloudWatch is a monitoring and observability service. It can be used to detect anomalous behavior in your environments, set alarms, visualize logs and metrics side by side and take automated actions.

- AWS Lambda: AWS Lambda lets you run code without provisioning or managing servers. You pay only for the compute time you consume — there is no charge when your code is not running.

Broad steps used to automate:

- Use a CloudWatch rule to trigger the execution on a schedule by calling a Lambda function.

- The Lambda function starts the respective notebook instance.

- As soon as the notebook instance starts, the Lifecycle configuration gets triggered.

- The Lifecycle configuration executes the script and then shuts down the notebook instance.

Detailed Steps

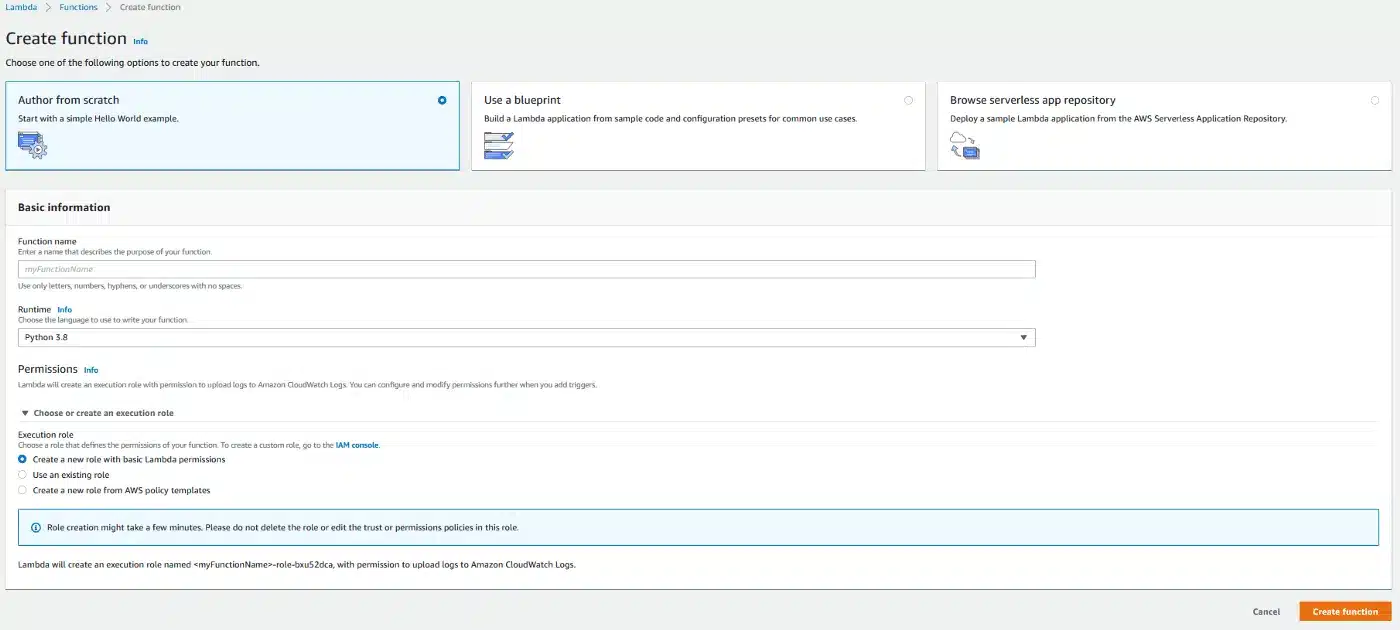

Lambda Function

We use a Lambda function to start the notebook instance. Let’s say the function is called ‘test-lambda-function’. Make sure to choose an execution role that has permissions for both Lambda and SageMaker.
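As a rough illustration of what such an execution role might allow, here is a minimal sketch that builds an IAM policy document in Python. The region, account ID and helper name `build_execution_policy` are hypothetical placeholders, and the exact set of actions you need may differ; this sketch assumes the function only starts and describes one notebook instance and writes its own logs.

```python
import json

# Hypothetical placeholders: replace with your own region and account ID.
REGION = "us-east-1"
ACCOUNT_ID = "123456789012"
NOTEBOOK_NAME = "test-notebook-instance"


def build_execution_policy(region, account_id, notebook_name):
    """Return an IAM policy document (as a dict) for the Lambda execution role."""
    notebook_arn = (
        f"arn:aws:sagemaker:{region}:{account_id}:notebook-instance/{notebook_name}"
    )
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                # SageMaker side: start the instance and read its status.
                "Effect": "Allow",
                "Action": [
                    "sagemaker:StartNotebookInstance",
                    "sagemaker:DescribeNotebookInstance",
                ],
                "Resource": notebook_arn,
            },
            {
                # Lambda side: let the function write to CloudWatch Logs.
                "Effect": "Allow",
                "Action": [
                    "logs:CreateLogGroup",
                    "logs:CreateLogStream",
                    "logs:PutLogEvents",
                ],
                "Resource": "*",
            },
        ],
    }


policy = build_execution_policy(REGION, ACCOUNT_ID, NOTEBOOK_NAME)
print(json.dumps(policy, indent=2))
```

The printed JSON can be pasted into the IAM console as an inline policy on the role. Scoping the SageMaker statement to a single notebook ARN is a design choice for least privilege, not a requirement.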

In the code below, ‘test-notebook-instance’ is the name of the notebook instance we want to automate.

    # Starting a notebook instance
    import boto3
    import logging

    def lambda_handler(event, context):
        client = boto3.client('sagemaker')
        client.start_notebook_instance(NotebookInstanceName='test-notebook-instance')
        return 0
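If the schedule fires while the instance is already running or still stopping, the start call is likely to raise an error. The following is a minimal sketch of a more defensive handler, assuming that a start request is only appropriate when the instance status is `Stopped`. The helper name `start_if_stopped` is hypothetical; `describe_notebook_instance` and `start_notebook_instance` are the boto3 SageMaker client calls.

```python
import logging

logger = logging.getLogger()
logger.setLevel(logging.INFO)

NOTEBOOK_NAME = "test-notebook-instance"


def start_if_stopped(client, name):
    """Start the notebook instance only when it is fully stopped.

    Returns the status observed before any action was taken.
    """
    status = client.describe_notebook_instance(
        NotebookInstanceName=name
    )["NotebookInstanceStatus"]
    if status == "Stopped":
        client.start_notebook_instance(NotebookInstanceName=name)
        logger.info("Start requested for %s", name)
    else:
        # For example InService, Pending or Stopping: leave it alone.
        logger.info("Skipping start: %s is %s", name, status)
    return status


def lambda_handler(event, context):
    # Imported here so the helper above can be exercised without AWS access.
    import boto3

    client = boto3.client("sagemaker")
    return {"previous_status": start_if_stopped(client, NOTEBOOK_NAME)}
```

Passing the client in as an argument keeps the decision logic separate from the AWS call, which makes it easy to try out with a stub before deploying.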

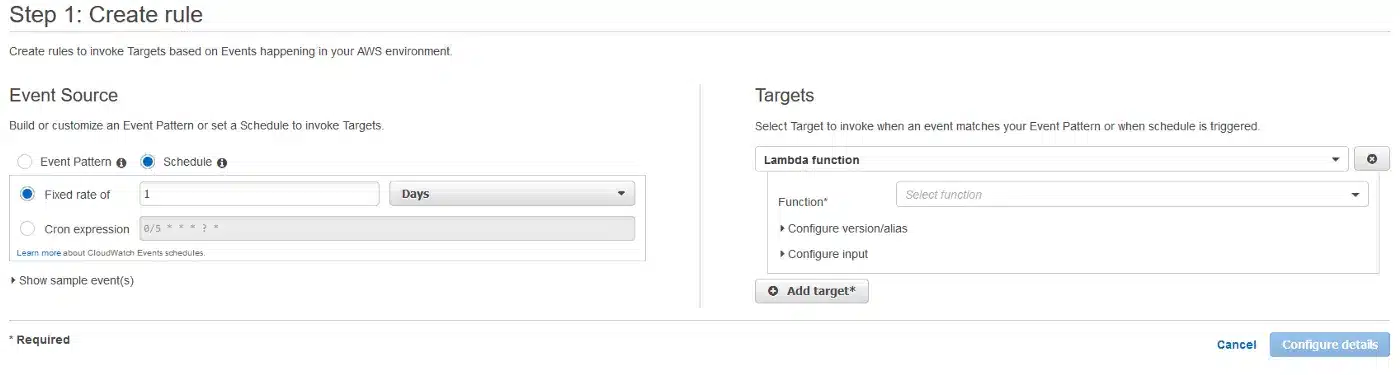

CloudWatch

- Go to Rules > Create rule.

- Choose a schedule as the event source and enter how often the rule should run.

- Add a target and choose the Lambda function name: ‘test-lambda-function’. This is the same function we created above.
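The same rule can also be created from code rather than the console. Below is a minimal sketch using the boto3 `events` and `lambda` clients; the helper name `schedule_lambda`, the rule name and the `rate(1 day)` schedule are assumptions chosen for illustration, not values from the setup above.

```python
def schedule_lambda(events_client, lambda_client, rule_name, schedule, function_name):
    """Create (or update) a scheduled rule and point it at a Lambda function.

    `schedule` is a schedule expression such as "rate(1 day)" or
    "cron(0 6 * * ? *)". Returns the ARN of the rule.
    """
    function_arn = lambda_client.get_function(FunctionName=function_name)[
        "Configuration"
    ]["FunctionArn"]

    rule_arn = events_client.put_rule(
        Name=rule_name,
        ScheduleExpression=schedule,
        State="ENABLED",
    )["RuleArn"]

    # Allow the rule to invoke the function. This call fails if a statement
    # with the same StatementId already exists on the function.
    lambda_client.add_permission(
        FunctionName=function_name,
        StatementId=f"{rule_name}-invoke",
        Action="lambda:InvokeFunction",
        Principal="events.amazonaws.com",
        SourceArn=rule_arn,
    )

    events_client.put_targets(
        Rule=rule_name,
        Targets=[{"Id": "1", "Arn": function_arn}],
    )
    return rule_arn


# Example usage (requires AWS credentials, so it is left commented out):
# import boto3
# schedule_lambda(
#     boto3.client("events"),
#     boto3.client("lambda"),
#     "test-notebook-schedule",  # hypothetical rule name
#     "rate(1 day)",
#     "test-lambda-function",
# )
```

When the rule is created through the console, the invoke permission is normally added for you; from code it has to be granted explicitly, which is why the sketch calls `add_permission`.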

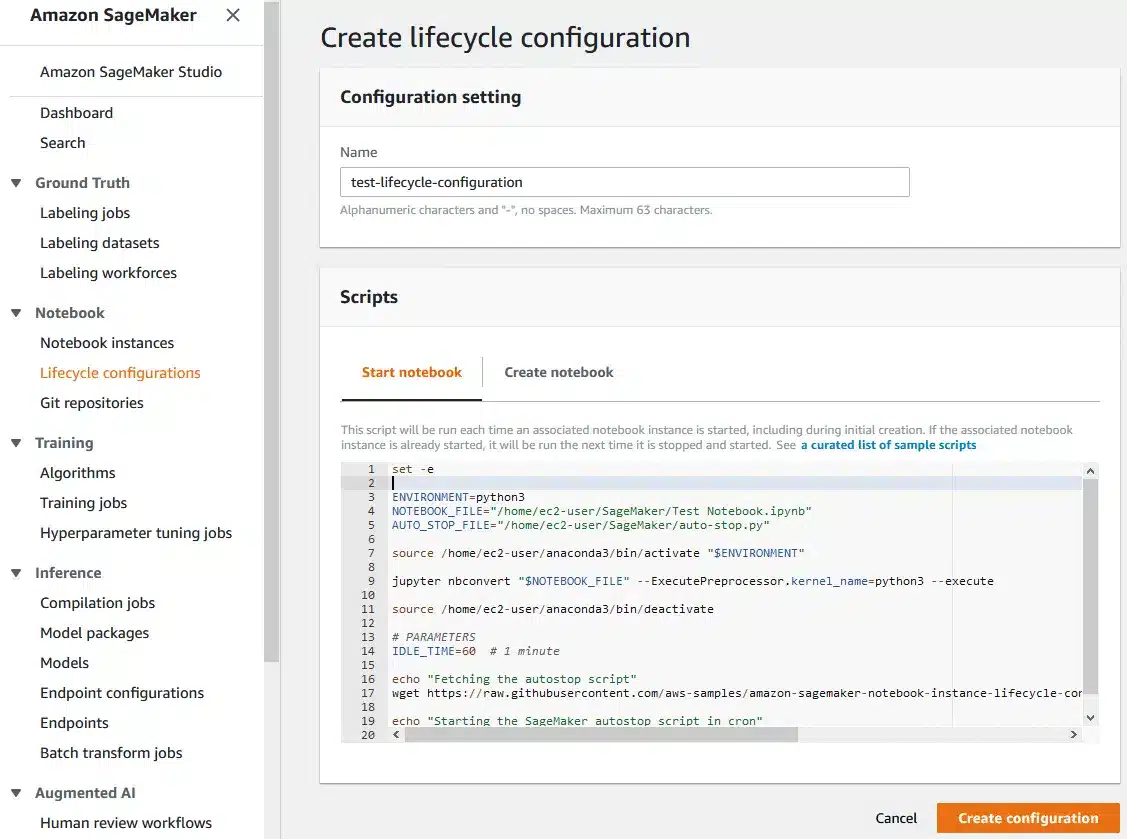

Lifecycle Configuration

We will now create a lifecycle configuration for our ‘test-notebook-instance’. Let us call it ‘test-lifecycle-configuration’.

The code:

    set -e

    ENVIRONMENT=python3
    NOTEBOOK_FILE="/home/ec2-user/SageMaker/Test Notebook.ipynb"
    AUTO_STOP_FILE="/home/ec2-user/SageMaker/auto-stop.py"

    source /home/ec2-user/anaconda3/bin/activate "$ENVIRONMENT"

    jupyter nbconvert "$NOTEBOOK_FILE" --ExecutePreprocessor.kernel_name=python3 --execute

    source /home/ec2-user/anaconda3/bin/deactivate

    # PARAMETERS
    IDLE_TIME=60 # 1 minute

    echo "Fetching the autostop script"
    wget https://raw.githubusercontent.com/aws-samples/amazon-sagemaker-notebook-instance-lifecycle-config-samples/master/scripts/auto-stop-idle/autostop.py

    echo "Starting the SageMaker autostop script in cron"
    (crontab -l 2>/dev/null; echo "*/1 * * * * /usr/bin/python $PWD/autostop.py --time $IDLE_TIME --ignore-connections") | crontab -

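Brief explanation of what the code does:

- Activate the python3 environment.

- Execute the Jupyter notebook with nbconvert.

- Set the idle threshold to 60 seconds (1 minute). This can be increased or lowered as per requirement.

- Download an AWS sample Python script containing the auto-stop functionality.

- Create a cron job that runs the auto-stop script every minute, so the notebook instance is stopped once it has been idle for longer than the threshold.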
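The lifecycle configuration can be created in the console, but it can also be registered from code. Here is a minimal sketch using the boto3 SageMaker client, assuming the shell script above has been saved to a local file (the file name `on-start.sh` in the usage comment is hypothetical, as are the helper names). The API expects the script content base64-encoded. Note that lifecycle configuration scripts are limited to five minutes of run time, so a notebook that takes longer may need to be launched in the background; that variation is not shown here.

```python
import base64


def build_on_start(script_text):
    """Encode a shell script in the shape the SageMaker API expects for OnStart."""
    encoded = base64.b64encode(script_text.encode("utf-8")).decode("ascii")
    return [{"Content": encoded}]


def register_lifecycle_config(client, config_name, script_text):
    """Create a lifecycle configuration whose on-start hook runs script_text."""
    return client.create_notebook_instance_lifecycle_config(
        NotebookInstanceLifecycleConfigName=config_name,
        OnStart=build_on_start(script_text),
    )


def attach_lifecycle_config(client, notebook_name, config_name):
    """Attach the configuration to a notebook instance (it must be stopped)."""
    return client.update_notebook_instance(
        NotebookInstanceName=notebook_name,
        LifecycleConfigName=config_name,
    )


# Example usage (requires AWS credentials, so it is left commented out):
# import boto3
# sm = boto3.client("sagemaker")
# with open("on-start.sh") as f:  # hypothetical local copy of the script
#     register_lifecycle_config(sm, "test-lifecycle-configuration", f.read())
# attach_lifecycle_config(sm, "test-notebook-instance", "test-lifecycle-configuration")
```

Keeping the script in a file under version control and registering it from code makes it easier to review changes to the on-start logic than editing it in the console.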